Discover how AI is being introduced into schools worldwide, opening exciting new opportunities for learning and innovation.

In a fifth-grade classroom, a student raises her hand and asks, “Is it cheating if I ask the computer for help?” The room goes quiet. The teacher hesitates. Half the class has already experimented with ChatGPT at home. The other half doesn’t have devices or permission to try. One child is bursting with ideas, another is worried about getting in trouble, and the adult in the room is balancing curiosity against policy, innovation against uncertainty.

That moment is playing out in schools and kitchens everywhere. Parents wonder whether AI will help their children think more deeply or think less. Teachers worry about safety, fairness, and losing control of learning. Students feel both empowered and anxious, able to do more than ever, yet unsure where the boundaries lie.

This is the crossroads we stand at: speed versus safety, curiosity versus fear, access versus inequity. Around the world, some education systems have chosen not to look away. Instead, they are teaching AI as part of the curriculum, treating it as a new literacy rather than a forbidden shortcut.

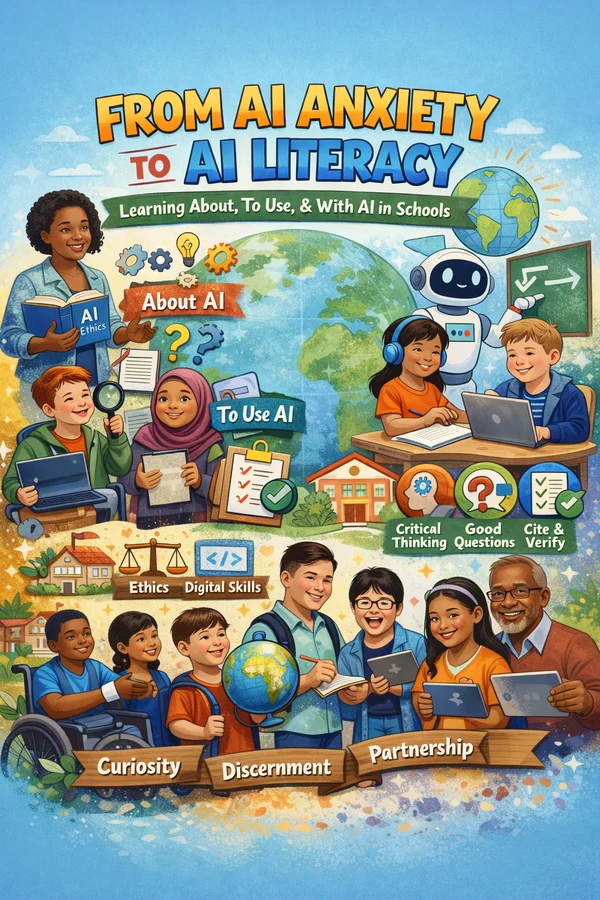

When I talk about “teaching AI,” I’m not talking about letting a bot do a child’s work. I’m talking about teaching discernment in an AI world, including how to ask better questions, recognize when an answer is incomplete or wrong, verify sources, and decide what not to accept. Discernment is the human skill that turns a powerful tool into a meaningful partner. Without it, AI becomes a shortcut. With it, AI becomes a mirror for thinking, a catalyst for curiosity, and a way to deepen understanding rather than replace it. At its best, teaching AI is about teaching judgment.

At the heart of discernment is the ability to ask good questions. AI reflects the quality of the prompt it is given. A vague question produces a shallow answer. A thoughtful prompt invites depth, nuance, and possibility. This is where learning lives. When students learn to shape a question, refine it, and push back on an answer, they are practicing the very skills education has always claimed to value: clarity, curiosity, iteration, and critical thinking. Prompting is not a trick. It is a modern form of inquiry.

This philosophy is the foundation of my book, Family Adventures from A to Z Using ChatGPT. The book invites families to explore AI together, not as a homework machine, but as a thinking partner, one that sparks stories, questions, projects, and conversations across generations. The goal is simple: move from AI anxiety to AI partnership. When families learn alongside children, curiosity replaces fear, and technology becomes a bridge for connection rather than a barrier.

“Teaching AI” can mean very different things. It can mean learning about AI: how it works, where it fails, and the ethical questions it raises. It can mean learning to use AI: how to prompt, verify, and cite responsibly. And it can mean learning with AI as a tutor, brainstorming partner, writing coach, or scaffold for different learners. The strongest models weave all three together. They are guided by clear policies around privacy, bias, and academic integrity. Teachers are trained before students are turned loose. Assignments are redesigned so thinking and process matter as much as speed.

Across the world, AI is no longer a fringe experiment; it is becoming a system-level priority. Countries such as Estonia and Singapore are embedding AI directly into national education strategies. Australia has linked AI to its curriculum. Beijing now requires instructional hours in AI. India plans to introduce AI and computational thinking in the early grades. Japan and the UK lead with national guardrails that enable responsible use.

In the United States, progress is uneven. States like California are building frameworks, while districts such as Houston are redesigning schools to focus on future-ready skills, including AI and design thinking. Momentum is real but far from uniform.

What these examples share is a recognition that AI literacy cannot be left to chance or home privilege. But they also reveal real risks. South Korea’s troubled rollout of AI-powered textbooks reminds us of what happens when speed outpaces design.

This moment in education is not about choosing sides for or against AI. It is about choosing whether we will lead with fear or with purpose. Control doesn’t come from blocking tools. It comes from understanding them. The systems that are getting this right are not chasing novelty. They are teaching discernment.

AI isn’t going away. Our responsibility is to ensure that the next generation doesn’t just use it but understands it, questions it, and shapes it. That is not a technology problem. It is an educational opportunity.